In Future ML Systems Will Be Qualitatively Different, I argued that we should expect ML systems to exhibit emergent capabilities. My main support for this was four historical examples of emergence in ML.

In some sense, extrapolating from only four data points is pretty sketchy. It's hard to tell if these examples are the start of a trend, or cherry-picked to tell a story. In low-data regimes like this, it's helpful to have a prior, and so I'll spend this appendix looking at related fields of science and examining how common emergent behavior is in those fields.

In short, my conclusion is that emergence is very common throughout the sciences, and especially common in biology, which I view as most analogous to ML. As such, I think it should be our default expectation for ML, and the four data points from before primarily serve to confirm this. Personally, forming this "prior" updated my views about as much as the (combined) historical examples that I previously discussed for ML.

More Is Different Across Domains

Recall that emergence refers to the idea that More Is Different: that quantitative changes can lead to qualitatively different phenomena. While this idea was first articulated by the physicist Philip Anderson, it occurs in many other domains as well:[1]

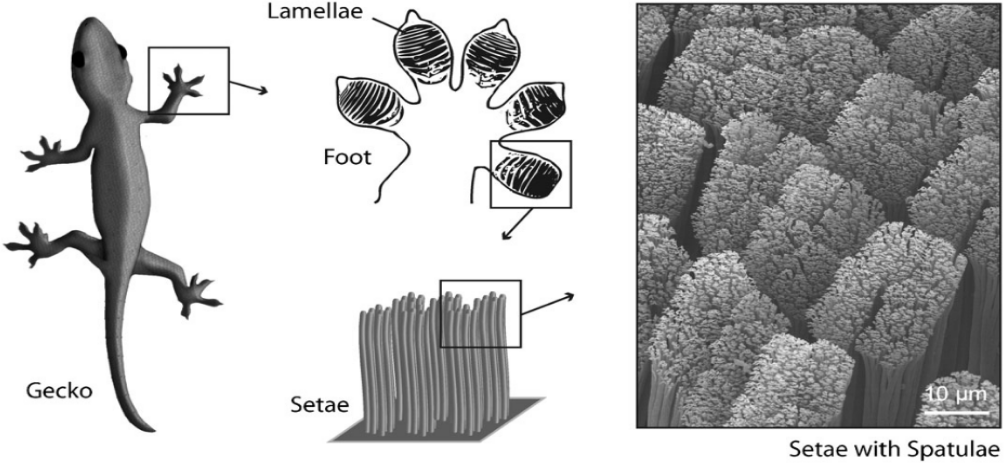

- Biology: Gecko feet are covered with tiny structures called spatulae (shown above), consisting of many keratin molecules woven together. Spatulae are responsible for geckos’ ability to walk up walls, but it’s clear that we couldn’t "scale down" the structure and retain the same ability---you need enough keratin to form the complex bristles shown above.

- Physics: Siloles (a type of molecule) are not luminescent individually, but become luminescent when put together in aggregate. This is because the aggregate is more rigid, reducing intramolecular motion that otherwise inhibits the production of photons.

- Computers: Operating systems only arose once computers became fast enough. Before that, individual programs took long enough that task management could be done by hand. Greater speed produced both the demand for automation and the capacity to support an operating system’s overhead.

- Economics: Increased population size enables increased specialization. Hunter-gatherer bands could not support smiths and architects. In the modern world, the emergence of Ford and other mass producers required a consumer market large enough to justify investing in large factories, standardization, and so on.

For a broadly accessible introduction to emergence, I recommend this video by Kurzgesagt. For a slightly longer treatment, a reader also recommended this other video.

Zooming In On Biology

Among the natural sciences, biology is the domain that seems most analogous to machine learning. While analogies between artificial and biological neurons are overwrought, these two fields share genuine, fundamental similarities. Both study objects that are shaped by complex optimization processes (evolution for biology, gradient descent for ML). The objects are composed of simple, well-understood parts, but they are difficult to understand in aggregate because optimization puts the parts together in complex ways. Progress in ML benefits from Moore's law, while biological understanding benefits from the Carlson curve for DNA sequencing (and earlier from a Moore-like law for X-ray crystallography).

Biology is also the domain where More Is Different holds most strongly. In biology, macromolecules (e.g. proteins) have complex structures that cannot exist in small molecules, and which support important functions. For instance, hemoglobin transports oxygen efficiently due to a nonlinear dissociation curve that wouldn't be possible with small molecules.
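
To make the nonlinear dissociation curve concrete, here is a minimal sketch using the Hill equation, a standard simplified model of cooperative binding. The partial pressures, half-saturation pressure, and Hill coefficient are rough illustrative values that I am assuming, not figures from this post, and the function names are hypothetical. The comparison is between a cooperative binder (Hill coefficient above 1, which requires several interacting binding sites) and a non-cooperative binder (coefficient of 1) with the same half-saturation pressure.

```python
def hill_saturation(po2_mmhg: float, p50_mmhg: float, n: float) -> float:
    """Fraction of oxygen-binding sites occupied, under the Hill equation."""
    x = po2_mmhg ** n
    return x / (p50_mmhg ** n + x)


# Assumed, illustrative values (not taken from the post).
LUNG_PO2 = 100.0    # mmHg, oxygen partial pressure where oxygen is loaded
TISSUE_PO2 = 20.0   # mmHg, oxygen partial pressure where oxygen is unloaded
P50 = 26.0          # mmHg, pressure at which half the sites are occupied
N_COOPERATIVE = 2.8     # approximate Hill coefficient for a cooperative binder
N_NONCOOPERATIVE = 1.0  # a simple binder with independent sites


def delivered_fraction(n: float, p50_mmhg: float = P50) -> float:
    """Fraction of carried oxygen released between lungs and tissue."""
    loaded = hill_saturation(LUNG_PO2, p50_mmhg, n)
    remaining = hill_saturation(TISSUE_PO2, p50_mmhg, n)
    return loaded - remaining


if __name__ == "__main__":
    print("cooperative:    ", round(delivered_fraction(N_COOPERATIVE), 3))
    print("non-cooperative:", round(delivered_fraction(N_NONCOOPERATIVE), 3))
```

Under these assumed numbers, the cooperative curve releases a noticeably larger fraction of its oxygen between lungs and tissue than the non-cooperative one, which is the sense in which the sigmoidal shape matters.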

One level up, protein polymers again unlock new structure and function, such as actin microfilaments forming the cytoskeleton. Muscles further combine actin microfilaments into fibres that can contract, enabling controlled motion.

In a different direction, long DNA macromolecules allow the transfer of genetic information. Smaller molecules simply don't have enough states to reliably encode a genome.
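
The counting argument here can be sketched in a few lines. A strand of n bases drawn from a four-letter alphabet has 4^n possible sequences, which is 2n bits of capacity. The function names below are hypothetical, and the sketch ignores everything about real genomes except raw sequence length.

```python
import math


def distinct_sequences(length: int, alphabet_size: int = 4) -> int:
    """Number of different sequences of the given length."""
    return alphabet_size ** length


def capacity_bits(length: int, alphabet_size: int = 4) -> float:
    """Upper bound on the information a sequence of this length can encode."""
    return length * math.log2(alphabet_size)


if __name__ == "__main__":
    # A 10-base strand: 4**10 = 1048576 sequences, i.e. 20 bits.
    print(distinct_sequences(10), capacity_bits(10))
```

Capacity grows only linearly with length, so encoding a large amount of information requires a correspondingly long molecule.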

Finally, complex organs such as eyes need a huge number of cells in even their simplest form. Fruit flies, whose visual resolution is near the minimum to detect objects, have eyes with 16,000 cells. Even among eyes, different numbers of cells (and hence different visual acuities) lead to qualitative differences. Spiders create patterns in their webs that birds can see (and thus avoid, to mutual benefit) but that insects cannot.

Assorted Additional Examples

Here are some additional examples of emergent behavior, some of them more ambiguous than others:

- Ants. A small number of ants will wander around and starve to death, but a large number of ants forms a complex self-sustaining colony.

- Internet. ARPANET connected a few hundred hosts and was a platform for research. The modern Internet connects a billion hosts and has transformative effects on daily life.

- Transistors. A few transistors let you build a radio. With 170 transistors you can build an ALU, and with thousands you can build a microprocessor.

- Neurons. It is plausible that the main neurophysiological difference between humans and other primates is having more neurons, rather than any fundamental difference in how the neurons are organized.

- Cities. Cities are more than groups of people: they also have events, infrastructure, governance, and culture.

- Life. A cell is alive, but if you separate out the individual elements they are not alive.

- Qubits. A 50-qubit quantum computer is a curiosity, but a 20-million-qubit quantum computer could break much of the world’s public-key encryption.

- Practice. If you practice a skill a little bit, you get a bit more proficient. If you practice it a lot, it becomes “chunked” in your brain and can be used to build further abstractions.

Counterarguments and Conclusion

Emergent behavior occurs, at the very least, in biology, physics, economics, and computer science. In biology, it occurs ubiquitously: for DNA, for hemoglobin, for muscles, for eyes.

In Empirical Findings Generalize Surprisingly Far, I further argued that empirical findings often generalize even in the presence of these emergent phenomena, citing examples in biology. But does this hold for other emergent domains, such as physics and economics? For physics, the answer is a clear yes, because of physical symmetries and conservation laws. Economics, on the other hand, is a field where external validity is notoriously difficult to come by.

Which of these is a better reference class for machine learning? Economics, like biology and machine learning, studies complex systems (humans and economies). However, it is also an area where controlled experimentation is difficult and sometimes impossible. In machine learning, it is easy to run controlled experiments---far easier than in biology or physics.

I personally think that rapid controlled experimentation is the key to uncovering lawlike behavior, and that in this sense ML is more like biology and physics than economics. If we could experiment on economies as easily as we could on neural networks, I think we would have a much better understanding of general economic laws. But I could be wrong. I think one strong indicator will be whether the emerging field of science of ML[2] uncovers important robust phenomena in the next five years. If it does, and those trends survive repeated phase transitions, then we’ll have objectively evaluable evidence about whether empirical phenomena in ML can successfully generalize. I’m personally optimistic, but ultimately time will tell.

[1] The introductory post to the series included several additional examples: DNA, uranium, water, traffic, and specialization.

[2] It’s possible that Science of ML won’t be the subfield to discover these phenomena. For instance, they might instead be discovered through work on interpretability or by people trying to engineer better systems. I'd still count that case as a "success" for my prediction.